The only problem is the manifestation of this law and the ‘how’ part of it. It is true for life as well, because life exists and the universe’s entropy is increasing. The law, of course, is deduced from extensive experiments and painstaking work by scientists and engineers and is true in every system we can observe. X2>x1, which implies, according to our equation, that entropy after the partition was removed is more than when the blue and orange gas molecules were separated.Īnd, clearly, the order that existed with the molecules separated has been lost. Since their total number (orange + blue) and the volume they occupy have increased (V/2+V/2=V), the total number of ways the particles can now exist with one another has also increased to, say, x2. In a similar way as above, the particles have many different ways of existing together. Gases, being as wiggly as they are, mix up. Suppose that we know the total number of their configurations (we don’t need to consider the number of microstates accompanying each of them for present purposes). There can be many such arrangements, their number increasing with the number of molecules. In one half, 10 orange molecules could be in one corner, the rest in the others. Right now, the particles have a certain number of ways in which they can exist, say x1.

Let one half contain blue gas molecules and the other, orange gas molecules. N1, n2… denote the numbers of particles existing in different parts of the system, where n1+n2+….+nn=N.Ĭonsider a cuboid, divided into two halves by a removable partition. W is the multiplicity of the configuration (the number of microstates that correspond to it),įor our purposes, it suffices to consider W to be a measure of the probability of a certain configuration of a system of particles (that is, the number of ways that the state can be achieved or exists). Let’s instead dive into one particular definition of it and how it leads to natural processes being as they are.įor us, entropy is a measure of the disorder in a system, and mathematically S=k ln(W) for a given configuration, where: The question of what entropy is, is difficult to answer to everyone’s satisfaction, so let’s not dwell on that.

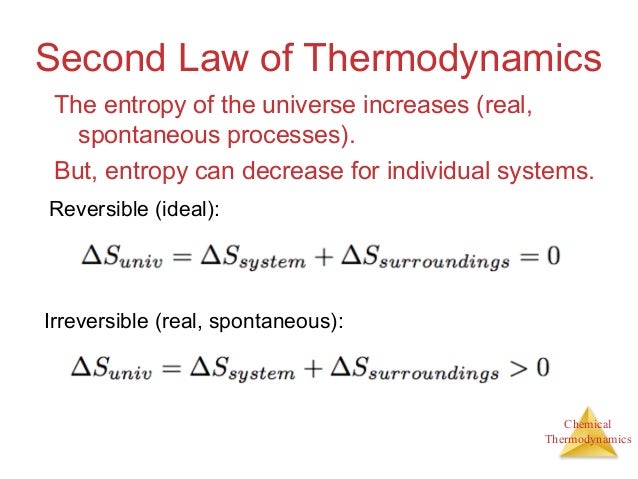

It defines the arrow of time.Įntropy change has a clean formula for the Carnot engine and finds many applications, principally those determining the efficiency of engines and processes. It is the measure of the information in a system. It is the measure of disorder in a system.

And we know it contains its own definition of the second law of thermodynamics. We know its change is zero over a reversible cycle. One of the most fascinating of these topics is entropy.Įntropy has numerous definitions, and each one of us likes to think of it in our own way. The father of thermodynamics is, after all, a brilliant engineer, Nicolas Léonard Sadi Carnot.Īt the same time, thermodynamics intrigues many because of its abstractness, the many laws it has, and how much they reveal and obscure about our world. We know the laws and engineers apply them. Thermodynamics is known to be one of the most ‘physical’ (in the sense of its many varied and important applications) and one of the most abstract of all scientific disciplines. This equation, and law, are familiar to every physics student and contain the crux of thermodynamics, a vast field with a large number of physical and theoretical applications.
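The definition $S = k \ln W$ can be made explicit. The post does not write out $W$. Assume, as in the usual counting, that the $N$ particles are distinguishable and that only their distribution among the parts of the system matters. Then the multiplicity of a configuration with $n_1, n_2, \dots, n_n$ particles in the different parts is the multinomial coefficient below.

For the partition experiment, assume further that both gases are ideal and at the same temperature. Write $N_b$ and $N_o$ (labels introduced here) for the numbers of blue and orange molecules. Each gas then expands from $V/2$ to $V$. The positional multiplicity of an ideal gas scales as $V^N$, so the ratio $x_2/x_1$ works out to $2^{N_b + N_o}$:

$$
W = \frac{N!}{n_1!\, n_2! \cdots n_n!}, \qquad S = k \ln W
$$

$$
\Delta S = k \ln \frac{x_2}{x_1} = k \ln\!\left[\left(\frac{V}{V/2}\right)^{N_b} \left(\frac{V}{V/2}\right)^{N_o}\right] = (N_b + N_o)\, k \ln 2 > 0
$$

This is the familiar ideal-gas entropy of mixing for two equal volumes. It is positive for any nonzero number of molecules, in line with the conclusion that $x_2 > x_1$.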

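The counting argument behind the partition experiment can also be checked numerically. The sketch below is a toy lattice-gas model, an assumption rather than the post's own model:

- Each half of the cuboid has a fixed number of sites, and each site holds at most one molecule.
- Arrangements are counted with the multinomial formula $W = N!/(n_1! \cdots n_n!)$.
- Empty sites are treated as one more "kind" of occupant.

The helpers `multiplicity`, `entropy` and `lattice_mixing` are hypothetical names chosen for illustration.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def multiplicity(counts):
    """Multinomial count W = N! / (n1! n2! ... nn!) with N = sum(counts)."""
    w = math.factorial(sum(counts))
    for n in counts:
        w //= math.factorial(n)  # every intermediate quotient is an exact integer
    return w


def entropy(w):
    """Boltzmann entropy S = k ln W, in J/K (math.log accepts very large ints)."""
    return K_B * math.log(w)


def lattice_mixing(sites_per_half, n_blue, n_orange):
    """Hypothetical toy model: return (x1, x2) for the partition experiment.

    x1: blue molecules confined to the left half, orange to the right half.
    x2: partition removed, both colours spread over all sites.
    """
    # Before: each half is counted separately, occupied vs. empty sites.
    x1 = (multiplicity([n_blue, sites_per_half - n_blue])
          * multiplicity([n_orange, sites_per_half - n_orange]))
    # After: blue, orange and empty sites share the whole lattice.
    total_sites = 2 * sites_per_half
    x2 = multiplicity([n_blue, n_orange, total_sites - n_blue - n_orange])
    return x1, x2


if __name__ == "__main__":
    x1, x2 = lattice_mixing(sites_per_half=4, n_blue=2, n_orange=2)
    print(x1, x2)  # 36 420
    print("Entropy change (J/K):", entropy(x2) - entropy(x1))  # positive
```

With 4 sites per half and 2 molecules of each colour, the count rises from 36 to 420 arrangements once the partition is gone. When there are many more sites than molecules, $\ln(x_2/x_1)$ approaches the total number of molecules times $\ln 2$, which is the same answer as treating both gases as ideal.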